Enterprise Search in the AI Era: What It Is and How It Differs from "Chat Over Docs"

Enterprise search turns fragmented company knowledge into a secure, measurable system that goes far beyond a simple “chat over docs” bot.

Enterprise search is supposed to feel effortless: type a question, get the right answer, move on. But in many organizations, finding the “right” file, policy, or decision still means hopping across tools, guessing keywords, and DM’ing coworkers. In a well-known McKinsey Global Institute analysis, “interaction workers” were estimated to spend 19% of their workweek simply trying to track down information.

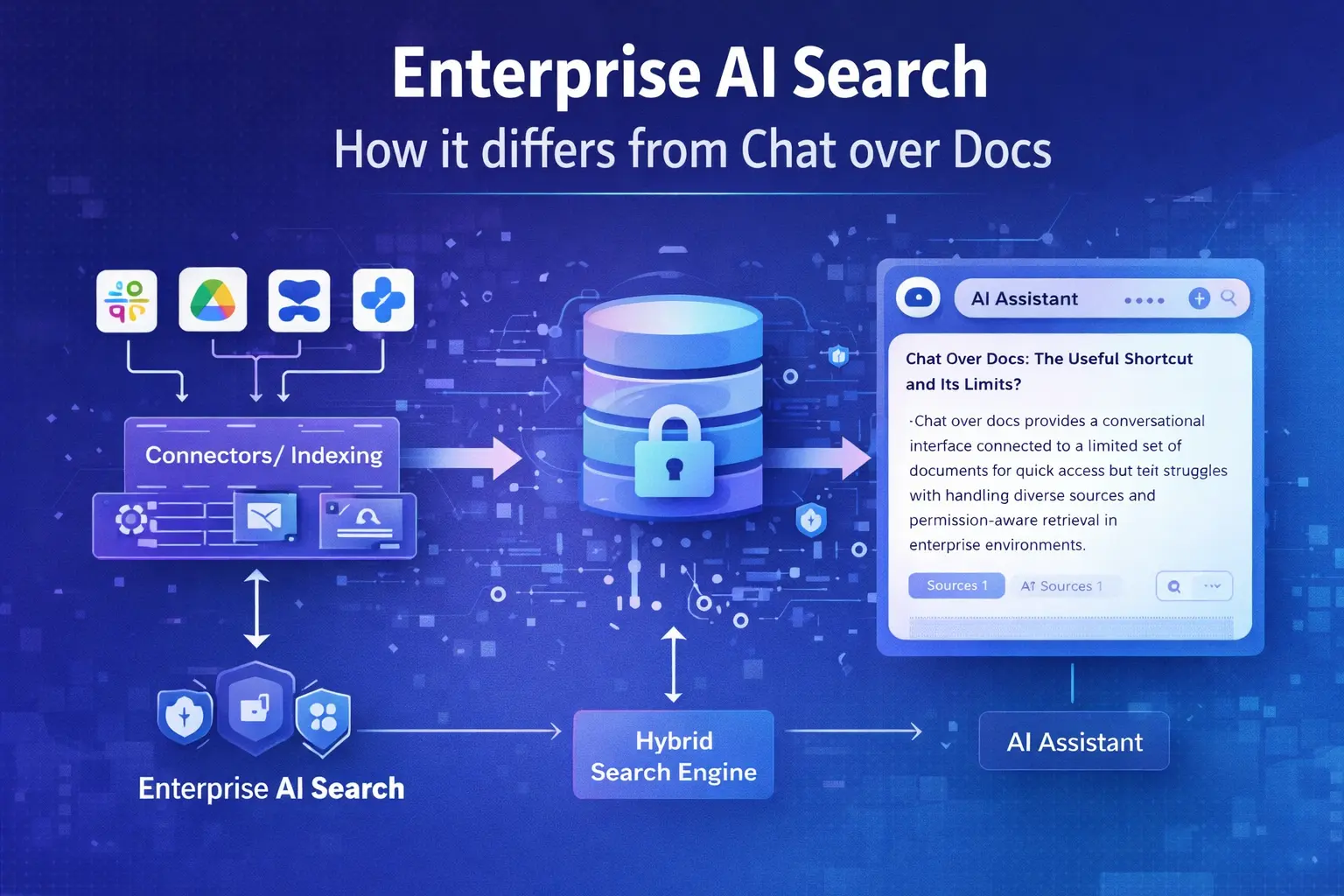

This is why “AI search” suddenly sounds like the cure-all. Yet there’s a big difference between a quick “chat over docs” bot and a true AI enterprise search platform. One can be a useful prototype; the other is an internal search engine designed to work at enterprise scale, with security, governance, relevance tuning, and measurable quality over time.

Enterprise AI Search Explained

Enterprise search, workplace search, internal search engine: the same problem, different angles

At its core, enterprise search is the retrieval of relevant information from disparate sources across an organization. Modern enterprise search engines are described as specialized tools that index, search, and retrieve information from internal repositories (documents, emails, databases, intranet sites, and other enterprise data sources), often using NLP/ML to improve the experience.

You’ll also hear two adjacent terms that matter for positioning:

Workplace search is essentially enterprise search viewed from the employee experience side: a single “front door” to knowledge across the digital workplace (intranet, collaboration tools, knowledge bases, ticketing systems, file shares). This framing emphasizes speed, discoverability, and reducing time wasted navigating silos.

An internal search engine is enterprise search viewed as infrastructure: connectors + indexing + ranking + security + analytics. It suggests a platform you can embed into multiple user experiences (a search bar, a help center, a chatbot, a support console) instead of a single UI.

The key point: these aren’t separate categories as much as different ways to describe the same organizational need.

What makes it “enterprise AI search” (not just enterprise search)

The phrase AI enterprise search generally implies that the system does more than return a list of documents; it also understands intent, ranks results using richer signals, and can synthesize answers while staying grounded in source content. That’s very close to how Gartner defines enterprise AI search: platforms that enable retrieval and synthesis of information across enterprise repositories, serving as a key technology for AI assistants and agents that use retrieval-augmented generation (RAG).

Gartner’s description also highlights what “enterprise-grade” implies in practice: these platforms connect to many sources, normalize/classify content, index it, and match/rank the most relevant results—and they’re designed to be customized and tuned for domains and operational use cases.

Why this category exists now

Two forces converged:

First, organizations are still fighting fragmentation. In an IDC study (commissioned by a search vendor but authored by IDC), knowledge workers were reported to spend about 16% of their time searching for information; the same study reports they find what they need only 56% of the time, and that roughly a quarter of time can be consumed by finding and analyzing information.

Second, generative AI changed user expectations: people increasingly want a direct answer, not ten blue links. But generating answers safely inside a company requires retrieval, permissions, and governance built in as infrastructure, not bolted on as a prompt-time workaround.

Chat Over Docs: The Useful Shortcut and Its Limits

What “chat over docs” usually means

“Chat over docs” typically refers to a conversational interface (an LLM chat) connected to a limited set of documents—often a shared folder, a subset of a wiki, or a curated knowledge base. The standard approach is some form of retrieval-augmented generation: retrieve passages from external text, then condition the model’s output on that retrieved content.

This pattern is grounded in well-cited research: the original RAG work describes models that combine parametric memory (the model) with non-parametric memory (an external index) to improve factuality and access knowledge that isn’t (or shouldn’t be) “baked into” the model’s weights. A practical industry explanation from NVIDIA similarly frames RAG as a way to improve responses by retrieving from external documents instead of relying on the model’s internal memory alone.
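To make the retrieve-then-condition pattern concrete, here is a minimal sketch in Python. It assumes a toy token-overlap scorer in place of a real index, and it stops at building the prompt rather than calling a model. The names `retrieve`, `build_prompt`, and `PASSAGES` are illustrative and do not come from any particular library.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase word tokens; a stand-in for a real text analyzer."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, passages: list[dict], k: int = 2) -> list[dict]:
    """Rank passages by token overlap with the query (toy scoring, not BM25)."""
    q = tokenize(query)
    scored = [(len(q & tokenize(p["text"])), p) for p in passages]
    scored = [(s, p) for s, p in scored if s > 0]  # drop passages with no overlap
    scored.sort(key=lambda sp: sp[0], reverse=True)
    return [p for _, p in scored[:k]]


def build_prompt(query: str, retrieved: list[dict]) -> str:
    """Condition the model on retrieved text by placing it in the prompt."""
    context = "\n".join(f"[{p['id']}] {p['text']}" for p in retrieved)
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )


# Hypothetical corpus used only for illustration.
PASSAGES = [
    {"id": "hr-1", "text": "Employees accrue 20 vacation days per year."},
    {"id": "it-7", "text": "Reset your VPN password in the self-service portal."},
    {"id": "hr-2", "text": "Unused vacation days expire in March."},
]

query = "How many vacation days do employees get?"
hits = retrieve(query, PASSAGES)
prompt = build_prompt(query, hits)
```

In this sketch the model only ever sees what the retriever returned, which is why retrieval quality and access control end up mattering more than the chat interface itself.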

So: chat over docs is not “wrong.” It’s often the fastest way to validate that employees want conversational access to internal knowledge.

Why chat over docs often fails to become “enterprise search”

Where chat over docs struggles is not the chat. It’s everything around retrieval and trust.

A true enterprise AI search platform is expected to connect to many systems, normalize and classify information, index it, and consistently rank the most relevant results. A workplace-scale system must also provide tuned relevance that improves over time, scale to many end-users, maintain fast response times, support configurable experiences, and provide analytics that help improve outcomes. These are characteristics of a search platform—not a quick chatbot integration.

In addition, enterprise environments introduce the hardest requirement: permission-aware retrieval. If an employee asks a question in a chat window, the system must only retrieve and expose content they are authorized to see. This isn’t a “nice to have”; it’s core to enterprise safety and adoption.

Chat over docs prototypes often hit one (or more) predictable walls:

That’s why “chat over docs” is best considered a subset of enterprise AI search: a UI that still depends on enterprise-grade search foundations.

The Practical Differences: A Side-by-Side Comparison

The simplest way to separate the categories is this: chat over docs is an application; enterprise AI search is a platform. The table below synthesizes (a) Gartner’s definition of enterprise AI search as retrieval + synthesis across repositories and as a foundation for RAG-based assistants/agents, (b) common RAG architecture described in the original RAG paper and industry explainers, and (c) the kind of platform features and operational controls associated with enterprise search systems and cloud search platforms (connectors, security trimming, personalization, analytics, NLU).

| Dimension | “Chat over docs” (typical) | Enterprise AI search (platform) |
|---|---|---|
| Primary goal | Answer questions over a limited document set | Retrieve, rank, and synthesize across enterprise repositories |
| Content coverage | Usually a subset (a folder, a wiki space, a KB) | Designed for “wide coverage”: many systems + normalization + classification |
| Retrieval quality | Often “good enough” for demos; less tuning | Tuned relevance, hybrid retrieval, and continuous improvement via analytics |
| Security model | Frequently fragile outside simple setups | Security trimming, ACL-aware indexing, identity/group expansion, least privilege alignment |
| Freshness | Manual or periodic; easy to fall behind | Connector-driven updates, controlled indexing, policies for lifecycle and governance |
| UX | A single chat surface | Multiple experiences: search bar, verticals, answer cards, chat/assistant, API integrations |
| Operability | Hard to measure beyond anecdotal feedback | Built for measurement: dashboards, logs, “zero-result” analysis, quality evaluation loops |
| Outcome | Helpful personal assistant for a narrow corpus | A durable internal search engine that powers workplace search and AI experiences |

If you’re choosing between the two, a practical rule of thumb is:

Under the Hood: How Enterprise AI Search Works

Enterprise AI search systems look simple to users (“just type a question”), but the architecture is closer to a data platform than a chatbot. Four layers matter most.

Connectors and ingestion: where enterprise projects actually succeed or fail

The enterprise reality is that knowledge lives everywhere: document systems, CRMs, ticketing tools, knowledge bases, intranets, chat transcripts, and more. Enterprise search solutions are explicitly described as gathering information from internal sources and organizing it into searchable indexes.

This is why connectors are first-class citizens in enterprise AI search. For example, Microsoft describes its connectors as extending search and Copilot experiences to data beyond Microsoft 365, including both synced connectors (indexing external data) and federated connectors (real-time connection, early access preview). That distinction matters: indexing improves speed and relevance tuning; federation can be useful when data cannot be copied or must remain strictly in the source system.

Similarly, Cloud Search connector documentation from Google indicates that connectors submit items with metadata and access control lists (ACLs)—a clear signal that enterprise search ingestion is tightly coupled to permissions.
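As a rough illustration of what "items with metadata and ACLs" can look like, the sketch below maps a record from a hypothetical ticketing system into a common item shape. The `IndexItem` class, the `from_ticket` function, and the raw field names (`key`, `summary`, `assignee`, `teams`) are all assumptions made for this example; they are not the Google Cloud Search API or any vendor's schema.

```python
from dataclasses import dataclass, field


@dataclass
class IndexItem:
    """Common shape a connector might submit to the index (hypothetical)."""
    item_id: str
    source: str
    title: str
    body: str
    metadata: dict[str, str] = field(default_factory=dict)
    allowed_principals: frozenset[str] = frozenset()


def from_ticket(raw: dict) -> IndexItem:
    """Normalize a raw ticket record and carry its readers along as an ACL."""
    readers = {f"user:{raw['assignee']}"}
    readers |= {f"group:{g}" for g in raw.get("teams", [])}
    return IndexItem(
        item_id=f"ticket:{raw['key']}",
        source="ticketing",
        title=raw["summary"].strip(),
        body=raw.get("description", ""),
        metadata={"status": raw.get("status", "unknown")},
        allowed_principals=frozenset(readers),
    )


item = from_ticket(
    {"key": "OPS-12", "summary": " VPN outage ", "assignee": "dana", "teams": ["netops"]}
)
```

The design point is that the ACL travels with the item at ingestion time, so the index can filter on it later instead of asking the source system on every query.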

The “connector layer” is also where enrichment happens: language detection, metadata extraction, entity recognition, classification, and de-duplication. In a cloud-search platform feature summary from Accenture, capabilities such as ingest transform pipelines, NLU, and connector frameworks are part of the assumed baseline.

Indexing and retrieval: hybrid is the new baseline

Indexing is more than “store embeddings.” Enterprise search platforms build indexes optimized for retrieval, ranking, filtering, and governance.

Modern enterprise AI search typically relies on hybrid search, combining lexical keyword matching with semantic/vector retrieval. Elastic’s explanation frames hybrid search as blending lexical (often BM25-style) and semantic vector search into a unified ranked list, using fusion strategies to improve relevance/recall. OpenSearch documentation similarly describes keyword search (BM25) as the default scoring method and positions semantic/hybrid search as necessary when understanding meaning and context matters.

OpenSearch’s hybrid search documentation also emphasizes an operationally important detail: hybrid retrieval often uses a search pipeline at query time to normalize and combine scores from different retrieval methods. And Elastic’s hybrid search materials explicitly connect this to evaluation quality metrics (for example, mentioning NDCG improvements) and common fusion approaches (such as reciprocal rank fusion).
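Reciprocal rank fusion is simple enough to sketch in a few lines. The version below assumes each retriever returns an ordered list of document ids and uses the commonly cited constant k = 60. The document ids are made up for illustration. Note that RRF is rank-based, so it differs from the score normalization approach described for OpenSearch pipelines.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse ranked lists: each document scores sum(1 / (k + rank)) over the lists."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties are broken by id to keep output deterministic.
    return sorted(scores, key=lambda d: (-scores[d], d))


# Hypothetical results from a lexical (BM25-style) and a semantic (vector) retriever.
lexical = ["policy-2024", "faq-vpn", "memo-3"]
semantic = ["faq-vpn", "handbook", "policy-2024"]

fused = reciprocal_rank_fusion([lexical, semantic])
```

Documents that both retrievers rank well rise to the top (`faq-vpn` and `policy-2024` here), while documents found by only one retriever still appear further down, which is the recall benefit hybrid search is after.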

This hybrid layer is what helps enterprise search handle real-world queries like:

Security and governance: permission trimming is not optional

Enterprise AI search must enforce access controls consistently. The principle that access should be restricted to the minimum necessary (“least privilege”) is explicitly defined by NIST in its glossary. Translating that into search means: if the user doesn’t have access to a document, it must not appear in results—and must not be used to generate an answer.

That’s why enterprise tooling talks about security trimming and ACL-aware indexing as baseline features. Accenture’s cloud search platform feature list defines security trimming as ensuring users can only view documents they have permission to access, and it separately calls out group expansion/identity services for permission evaluation.
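A minimal sketch of security trimming with group expansion might look like the following. It assumes flat (non-nested) groups, an in-memory group directory, and ACLs stored as sets of `user:` and `group:` principals. All names and data are hypothetical, and a production system would typically apply this filter inside the index query rather than after retrieval.

```python
def expand_principals(user: str, group_members: dict[str, set[str]]) -> set[str]:
    """Return the user's own principal plus every group that lists them."""
    principals = {f"user:{user}"}
    for group, members in group_members.items():
        if user in members:
            principals.add(f"group:{group}")
    return principals


def security_trim(candidates: list[dict], principals: set[str]) -> list[dict]:
    """Keep only candidates whose ACL shares at least one principal with the user."""
    return [doc for doc in candidates if doc["acl"] & principals]


# Hypothetical directory and candidate set, for illustration only.
GROUPS = {"finance": {"ana"}, "all-staff": {"ana", "ben"}}
CANDIDATES = [
    {"id": "handbook", "acl": {"group:all-staff"}},
    {"id": "salary-bands", "acl": {"group:finance"}},
    {"id": "ben-review", "acl": {"user:ben"}},
]


def visible_ids(user: str) -> list[str]:
    """Ids the user may see; only these should reach results or answer generation."""
    trimmed = security_trim(CANDIDATES, expand_principals(user, GROUPS))
    return [doc["id"] for doc in trimmed]
```

Here `visible_ids("ana")` includes the finance document while `visible_ids("ben")` does not, and a user with no matching principals sees nothing. The same trimmed list should feed any generated answer, so restricted content never enters the prompt.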

Major ecosystems operationalize this through ACL propagation and identity mapping:

If you’re building AI answers, the same rule applies: “permission-trimmed answers” are increasingly described as a requirement for secure enterprise chat experiences.

Synthesis: answers are a feature, not the foundation

Enterprise AI search often includes generative “answering,” but the safest framing is: generation is downstream of retrieval. Gartner’s definition is explicit that enterprise AI search enables retrieval and synthesis across repositories and is key for RAG-based assistants and agents. IBM’s enterprise search overview similarly frames modern enterprise search as incorporating generative AI and RAG approaches while warning about hallucinations and positioning RAG as a mitigation technique.

In practice, mature enterprise AI search experiences usually combine:

That “multi-modal UX” is one of the clearest differences from chat over docs, which often collapses the experience into a single conversational output.

How to Choose an Enterprise AI Search Platform

The selection criteria should reflect what enterprise AI search is: a platform for retrieval + ranking + synthesis across repositories, designed to be customized and tuned, with prepackaged integrations and domain-specific experiences.

A practical selection checklist

Use the following as a “shortlist filter.” It’s intentionally outcome-oriented and maps directly to what industry sources describe as core platform capabilities (connectors, indexing, ranking, NLU, personalization, analytics, security trimming).

How to evaluate vendors without getting trapped in demos

A real enterprise AI search evaluation is closer to an engineering benchmark than a marketing demo—because relevance and permissions don’t reveal themselves in scripted scenarios.
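One way to make that benchmark concrete is a graded relevance metric such as NDCG, computed over real queries with human-judged results. The sketch below assumes relevance grades from 0 (irrelevant) to 3 (ideal) and, for simplicity, computes the ideal ordering from the same judged list rather than from the full corpus. The function names are illustrative.

```python
import math


def dcg(relevances: list[float]) -> float:
    """Discounted cumulative gain: graded relevance discounted by log2(position + 1)."""
    return sum(rel / math.log2(pos + 1) for pos, rel in enumerate(relevances, start=1))


def ndcg_at_k(relevances: list[float], k: int) -> float:
    """DCG of the top-k results divided by the DCG of the best possible ordering."""
    ideal = dcg(sorted(relevances, reverse=True)[:k])
    if ideal == 0:
        return 0.0  # no relevant results were judged for this query
    return dcg(relevances[:k]) / ideal


# Judged grades for one query, in the order the system returned the results.
returned = [3, 2, 0, 1]
score = ndcg_at_k(returned, k=4)
```

Averaging this score over a fixed query set before and after a tuning change gives a comparison that a scripted demo cannot provide.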

A strong proof-of-value typically includes:

If you can’t measure improvements (even with simple metrics like “time to first useful result,” “zero-result rate,” or task completion time), you’ll struggle to justify investment and to run search as an ongoing capability. The APQC description of modern enterprise search explicitly frames analytics and continuous improvement as part of how modern search delivers value.
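Metrics like these can be derived from ordinary query logs. The sketch below assumes a hypothetical log format in which each entry records the number of results and, when the user clicked something, the seconds until the first click. It treats the first click as a rough proxy for "time to first useful result", which is an assumption rather than a standard definition.

```python
from statistics import median
from typing import Optional


def zero_result_rate(log: list[dict]) -> float:
    """Share of queries that returned no results at all."""
    if not log:
        return 0.0
    return sum(1 for entry in log if entry["result_count"] == 0) / len(log)


def median_time_to_first_click(log: list[dict]) -> Optional[float]:
    """Median seconds to first click, ignoring queries that had no click."""
    times = [
        entry["first_click_seconds"]
        for entry in log
        if entry.get("first_click_seconds") is not None
    ]
    return median(times) if times else None


# Hypothetical log entries, for illustration only.
QUERY_LOG = [
    {"query": "expense policy", "result_count": 5, "first_click_seconds": 4.0},
    {"query": "q3 okr deck", "result_count": 0, "first_click_seconds": None},
    {"query": "vpn reset", "result_count": 3, "first_click_seconds": 10.0},
    {"query": "parental leave form", "result_count": 0, "first_click_seconds": None},
]

rate = zero_result_rate(QUERY_LOG)
time_to_click = median_time_to_first_click(QUERY_LOG)
```

Tracking these numbers week over week, and reviewing the actual zero-result queries, is usually more informative than any single snapshot.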

Common red flags

These are patterns that frequently signal you’re buying “chat over docs with a bigger price tag” instead of enterprise AI search:

Conclusion

Enterprise AI search turns fragmented corporate knowledge into a secure, measurable, improving system—while chat over docs is often a helpful starting point. If you want accurate answers that respect permissions, stay fresh, and scale across tools, invest in enterprise search foundations first. Ready to see it live? Book a demo.
